An Onyx alternative you don't have to host yourself

A virtual version of your business

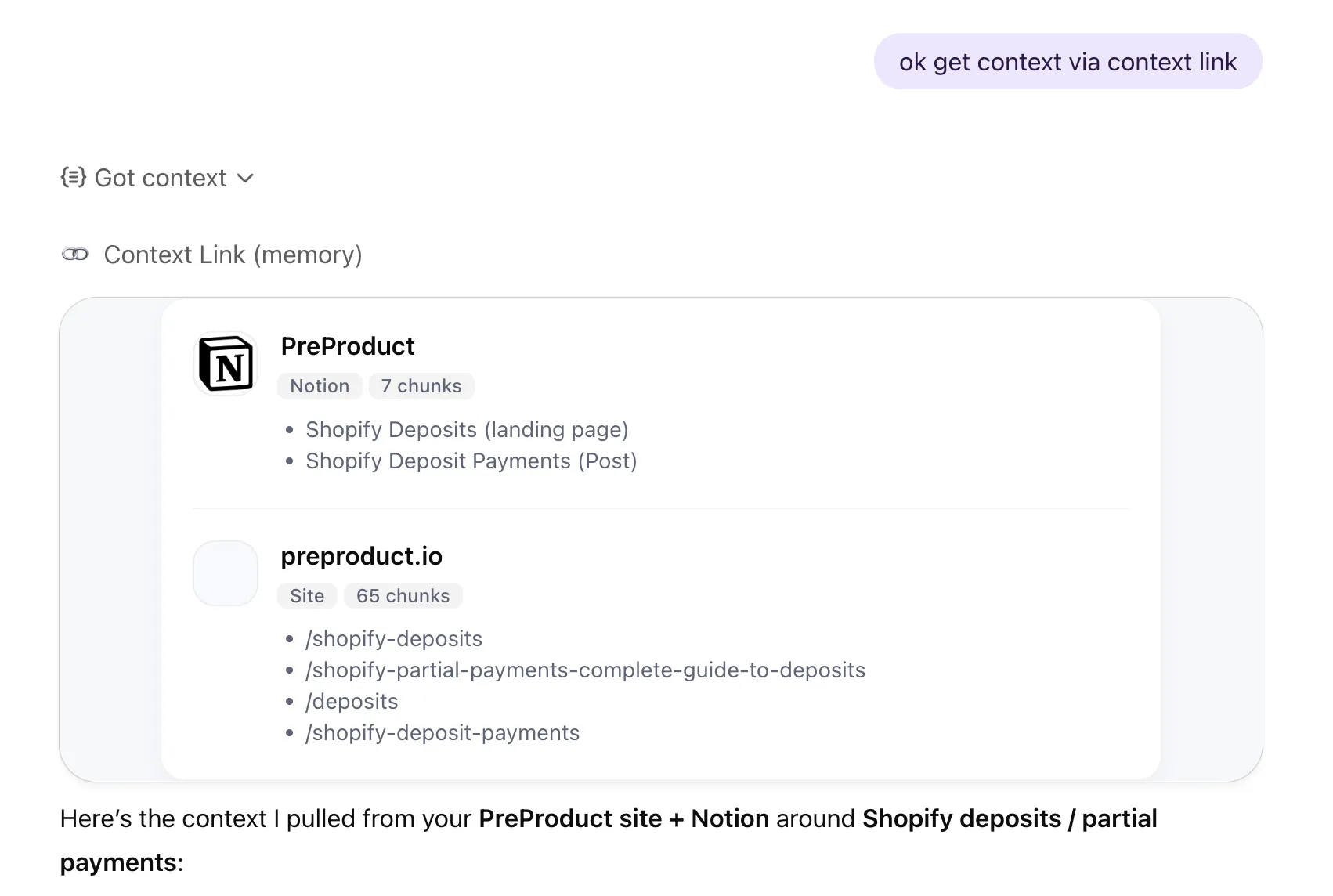

Onyx is open-source enterprise search you deploy on your own infrastructure: Docker, Vespa, PostgreSQL, Redis, and your own LLM keys. Context Link is a virtual version of your business for the AI era. Connect your sources, ask source-backed questions from inside ChatGPT, Claude, Gemini, or any MCP-aware agent, and observe Lenses on positioning, competitors, customer support pulse, and business activity. Recurring AI deep research keeps those Lenses current, and Suggested Actions help you move things forward. No servers, no DevOps, no infrastructure to babysit.

Key Differences

Onyx is self-hosted enterprise search that needs Docker, 12+ CPU cores, 24 GB RAM, and ongoing DevOps to maintain

Context Link is a fully managed virtual version of your business: Connect, Ask, Observe, Evolve. No servers, no containers, no infrastructure to break at 2 a.m.

Onyx stops at search inside its own chat UI. Context Link adds analytical Lenses, recurring AI deep research, and Suggested Actions on top of retrieval

Lenses on positioning, competitors, customer support pulse, and business activity surface what's true right now, what changed, and what needs attention

Reach Context Link from inside ChatGPT, Claude, Gemini, and any MCP-aware agent. Onyx asks your team to log into its own chat UI

Onyx requires you to bring your own LLM API keys. Context Link bundles the AI deep research and answer composition into the service

Use Your Existing AI With Your Existing Knowledge Sources

Which Should You Choose?

Onyx and Context Link both give AI access to your company knowledge. They take fundamentally different approaches to getting there.

Choose Onyx If...

- You have a developer or DevOps engineer who can deploy and maintain a multi-container Docker stack
- Data sovereignty is non-negotiable -- you need everything on your own servers, no exceptions
- You want to customize the search pipeline, fork the code, or build on top of the platform
- You need enterprise connectors like Salesforce, Jira, Confluence, or ServiceNow
- You're comfortable managing LLM API keys, model servers, and infrastructure costs separately

Choose Context Link If...

- You're a founder or owner-operator running a small business of 3-200 that uses AI every day and doesn't have DevOps capacity
- You want a virtual version of your business: Lenses on positioning, competitors, customer support pulse, and business activity that surface what's true right now, what changed, and what needs attention
- You want recurring AI deep research keeping that picture current, plus Suggested Actions when something shifts
- You want AI to reach Context Link from inside ChatGPT, Claude, Gemini, or any MCP-aware agent, with no new chat surface to adopt
- You need to connect Notion, Google Docs, Google Drive, email (Gmail, Outlook, Zoho, Fastmail, IMAP), Basecamp, Monday.com, websites, or uploaded files
- You want to be set up today, not after a week of Docker troubleshooting

Feature Comparison

| Capability | Onyx | Context Link |
|---|---|---|
| Where you work | Onyx's own chat UI (or Slack integration) | From inside ChatGPT, Claude, Gemini, and any MCP-aware agent, plus a dashboard for humans observing Lenses and choosing goals |
| Core promise | Self-hosted enterprise search over your connected sources | A virtual version of your business: Connect sources, Ask source-backed questions, Observe Lenses, Evolve through Suggested Actions |
| AI model | Bring your own. OpenAI, Anthropic, or self-hosted, you manage API keys and costs | Model-agnostic on the asking side. Recurring AI deep research and answer composition are bundled into the service |
| Connectors | 48 connectors, enterprise-focused (Confluence, Jira, Salesforce, Slack, Google Drive, etc.) | Notion, Google Docs, Google Drive, email (Gmail, Outlook, Zoho, Fastmail, IMAP), Basecamp, Monday.com, any website, uploaded files (PDFs, Word, Markdown) |
| Setup time | Hours to days (Docker deployment, OAuth config, LLM setup, infrastructure provisioning) | Minutes (self-serve, no IT needed) |
| Pricing | Free self-hosted (but you pay for infrastructure ~$150+/mo + LLM API costs) or $20/user/mo cloud + LLM costs | SMB-friendly per-seat pricing, all-inclusive, no hidden infrastructure costs |
| Team size | Built for enterprise teams with IT support | Built for founders and small businesses of 3-200 |
| Lenses (analytical views) | No equivalent. Onyx is a search box, not a business-state surface | Source-backed Lenses on positioning, competitors, customer support pulse, and business activity. Each one surfaces what's true right now, what changed, and what needs attention, with snapshot history |
| Recurring AI deep research | Not part of the product. Onyx searches what you've indexed, it doesn't go out and research the world for you | Each Lens re-runs deep research on a schedule, feeding the model fresh external evidence (competitor moves, market shifts, what's been written about your space) |
| Suggested Actions | No equivalent. Onyx stops at retrieval | Pick a goal for a Lens. Context Link briefs an AI on your sources, your Lens, and the latest deep research, then turns the goal into concrete next moves with linked evidence |
| Direct question answering (Q&A) | Onyx Q&A inside Onyx's web app / Slack bot | Ask Question skill invoked from inside ChatGPT or Claude: `/ask-question [question]` or "ask Context Link what …". Returns one grounded paragraph with numbered citations. Secondary to the primary `get context on [topic]` workflow |
| Memories (secondary) | No equivalent. Read-only search over existing documents | AI-pushed namespaced documents under /slash routes for canonical facts and brand voice. A quiet supporting feature, not the headline |
| Best for | Teams with DevOps capacity who need full control over their search infrastructure | Giving your business a virtual version AI can ask, observe, and help evolve, without running servers |

The Real Differentiation

Onyx

Onyx is a genuine open-source RAG platform with serious engineering behind it. It connects to 48 enterprise tools, supports any LLM, and gives you full control over your data. But 'full control' comes with real costs: you need Docker, PostgreSQL, Vespa, Redis, model servers, and someone to keep it all running. The baseline deployment needs 12 CPU cores and 24 GB of RAM. Users have reported Vespa consuming 42 GB on a 64 GB machine. And once you've stood it up, the product is still essentially a search box: it indexes your sources and lets you query them. There's no recurring deep research, no business-state Lenses, no closed-loop way to act on what you find.

Context Link

Context Link is a virtual version of your business for the AI era. Connect the places work happens (Notion, Google Docs, Google Drive, email, Basecamp, Monday.com, websites, uploaded files) and Context Link turns them into a living business state. Ask source-backed questions from inside ChatGPT, Claude, Gemini, or any MCP-aware agent. Observe Lenses on positioning, competitors, customer support pulse, and business activity, kept current by recurring AI deep research, surfacing what's true right now, what changed, and what needs attention. Pick a goal for a Lens and work through Suggested Actions to move things forward. Under the hood it works like an AI knowledge base and managed RAG workspace, but the promise is bigger than search, and you don't have to run any of it.

Onyx gives you a search box and infrastructure to run. Context Link gives you a virtual version of your business, fully managed.

Meet your team where they already work

Your team stays on the AI tools that work best for them: ChatGPT, Claude, Gemini, Copilot. Context Link upgrades every conversation with your company's actual knowledge. Easy adoption, zero workflow disruption.


What Onyx Does Well

Onyx is a real product built by a strong team (YC W24, backed by Khosla Ventures and First Round Capital, used by Netflix and Ramp). If you have the infrastructure capacity, these strengths genuinely matter.

Genuinely open source

The Community Edition is MIT-licensed. You can read every line of code, fork it, modify it, and run it entirely on your own servers. For teams with strict open-source requirements or regulatory constraints, this transparency is valuable.

Full data sovereignty

Self-hosted means your data never leaves your infrastructure. For defense contractors, financial services, healthcare, or anyone with strict data residency requirements, this is a real differentiator.

Model agnostic

Onyx works with OpenAI, Anthropic, Google, or self-hosted models via Ollama and vLLM. No vendor lock-in on the AI model layer -- you pick what works for your use case and budget.

48 enterprise connectors

Confluence, Jira, Salesforce, Slack, Google Drive, SharePoint, Zendesk, HubSpot, and many more. If your knowledge lives in enterprise tools, Onyx probably has a connector for it.

Permission-aware search

Onyx inherits access controls from your source systems. Users only see documents they're authorized to see -- important for organizations where not everyone should access everything.

What Context Link Does Differently

A virtual version of your business, not just a search box

Onyx indexes and retrieves. Context Link adds analytical Lenses on top of retrieval, so you don't just get answers, you get a continuous read on positioning, competitors, customer support pulse, and business activity.

Lenses, not generic dashboards

Each Lens is a source-backed analytical view from one angle. Indicators run on a schedule, snapshots show how things shifted, and every result is backed by retrievable evidence in the right column.

Recurring AI deep research

Lenses don't only read your docs. They re-run deep research on a schedule, feeding the model fresh external evidence so the picture keeps up with what's happening outside your walls. No manual refresh, no static snapshot.

Suggested Actions close the loop

Pick a goal for a Lens (go upmarket, tighten our ICP, respond to a competitor launch). Context Link briefs an AI on your sources, your Lens, and the latest deep research, then turns the goal into concrete next moves with linked evidence.

No infrastructure to manage

Context Link is fully managed. No Docker, no Vespa, no PostgreSQL, no Redis, no model servers. Indexing, chunking, embeddings, retrieval, and recurring deep research are all handled for you.

Reach Context Link from inside the AI you already use

Ask from inside ChatGPT, Claude, Gemini, or any MCP-aware agent. Onyx asks your team to log into its own chat UI. Context Link plugs into the tools they're already in.

Connect the sources small businesses actually use

Notion, Google Docs, Google Drive, email (Gmail, Outlook, Zoho, Fastmail, custom domains via IMAP), Basecamp, Monday.com, any website via sitemap, uploaded files (PDFs, Word docs, Markdown). Onyx's connectors skew enterprise (Confluence, Jira, Salesforce). Context Link connects to the tools small businesses run on.

Minutes to value

Connect your sources and start retrieving context the same day. No Docker troubleshooting, no OAuth configuration debugging, no waiting for Vespa to finish indexing. One person can set this up on a Tuesday afternoon.

SMB-friendly pricing

Built for founders and small businesses of 3-200. No infrastructure costs to estimate, no LLM API bills to track separately, no 'free but actually $150/month in cloud compute' surprises.

Frequently Asked Questions

Is Context Link a direct replacement for Onyx?

Onyx is free and open source. Why would I pay for Context Link?

What about Onyx's cloud plan at $20/user/month?

What is a Lens?

What is recurring AI deep research?

What are Suggested Actions?

Can I use Context Link with ChatGPT AND Claude?

Onyx has 48 connectors. Does Context Link have enough?

How long does it take to set up?

What about data sovereignty? Onyx lets me keep data on my own servers.

Does Context Link have an 'Ask' feature like Onyx?

The Bottom Line

Onyx

Onyx is a serious open-source RAG platform backed by YC, Khosla Ventures, and First Round Capital. If you have a developer who can manage Docker deployments, need full data sovereignty, or want to customize the search pipeline at the code level, Onyx gives you that control.

Context Link

Context Link is a virtual version of your business for the AI era, fully managed. Connect your sources once, then ask source-backed questions from inside ChatGPT, Claude, Gemini, or any MCP-aware agent. Observe Lenses on positioning, competitors, customer support pulse, and business activity, kept current by recurring AI deep research. Pick a goal and work through Suggested Actions that move things forward. No servers, no API keys, no infrastructure to babysit.

Quick Decision Guide

I need full data sovereignty and the ability to customize the RAG pipeline at the code level

Onyx is the right choice

I want a virtual version of my business with Lenses on positioning, competitors, support, and activity

Context Link is the right choice

I want recurring AI deep research and Suggested Actions, not just enterprise search

Context Link is the right choice

I don't have a developer to maintain Docker containers, Vespa, and model servers

Context Link is the right choice

Give Your Business a Virtual Version AI Can Talk to

Context

- Search all your sources by meaning, not keywords

- Works with ChatGPT, Claude, Copilot & Gemini

- Connect Google Drive, Notion, files, email, websites & more

- Save AI outputs as reusable memories under any /slash

- Use /get-context for bigger briefings, or /ask-question for concise answers with citations

- Connections auto re-sync every 24 hours

Context + Lenses

- Living Lenses on positioning, competitors, customer support and more

- Backed by recurring AI deep research and your live-updating context

- Pick a goal in any Lens, and AI suggests actions to move your business there

- Lens history shows how your business shifts over time

No credit card required. No servers to provision. No Docker needed.